coordinator: riccardo.costanzi@unipi.it

The topic Trustworthy Artificial and Embodied Intelligence (TAEI) has been identified as one of the four frontier research lines underlying Industry 5.0: the industrial paradigm of the future, which is centred on humans and intrinsically characterized by sustainability, unlike earlier approaches (e.g., Industry 4.0) that put the production process at the centre with the aim of optimizing it.

The TAEI line carries out research to enable the systems of the future to integrate various forms of intelligence in a pervasive and reliable way.

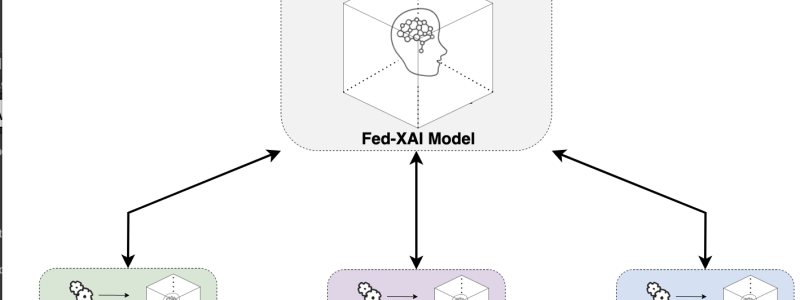

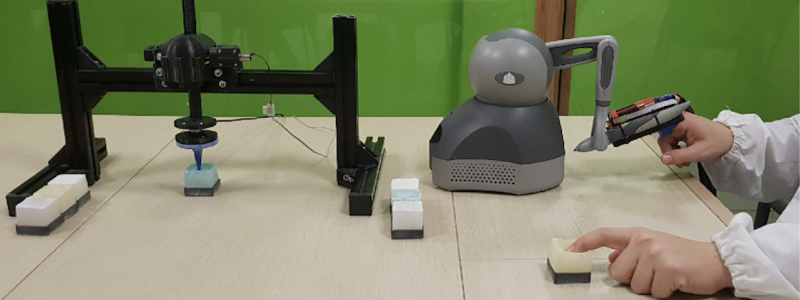

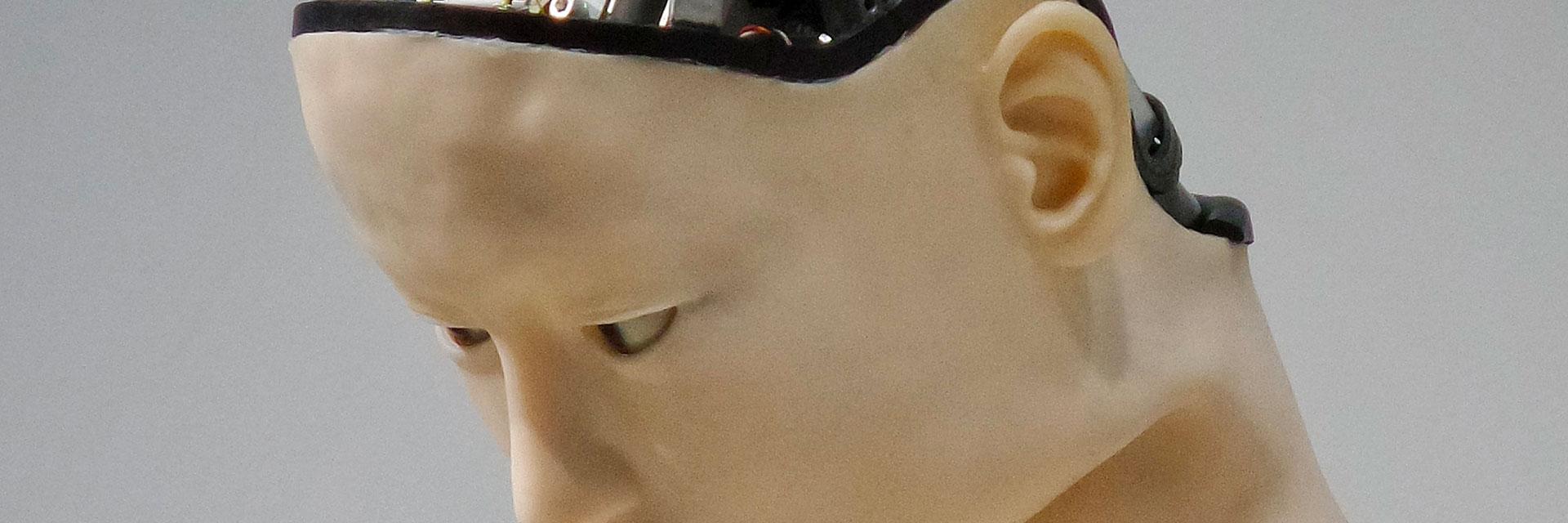

To this end, the Artificial Intelligence (AI) methodologies developed within the TAEI research line are designed to be explainable to humans while preserving the privacy of the data needed to train the models. At the same time, Embodied Intelligence (EI), the intelligence intrinsic to the physical body of agents, is developed within the TAEI line as a key enabler for agents that can interact suitably with different kinds of environments. This will allow us to bring intelligent autonomous agents (e.g., robots) to a higher level within industry, and also to bring them outside industry into natural environments, which are usually more complex because they are unstructured and often difficult for humans to explore (for example, space, underwater, aerial or remote ground habitats).

Agents of the future, regardless of the scenario or environment in which they will act, need to synthesize, by design, Trustworthy Artificial and Embodied Intelligence.

Because of its nature, research carried out within the TAEI line has a wide-ranging impact, spanning:

- Robotics in industrial environments;

- Autonomous exploration of unknown, unstructured, potentially hostile environments;

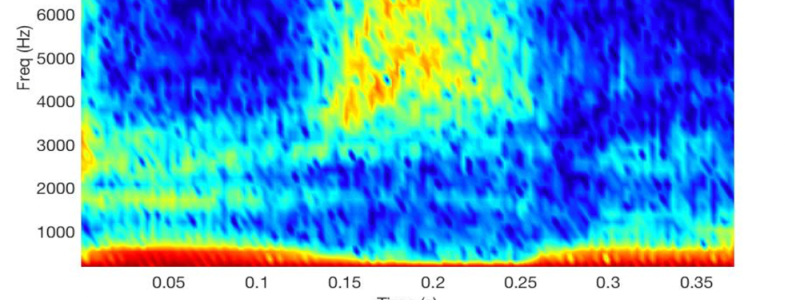

- AI tools for healthcare and bioprinting;

- AI for the networks of the future (5G, 6G);

- Social Media Safety.

The TAEI line carries out research to enable the systems of the future to integrate various forms of intelligence in a pervasive and reliable way.

To this end, the Artificial Intelligence (AI) methodologies developed within the TAEI research line are designed to be explainable to humans while preserving the privacy of the data needed to train the models. At the same time, Embodied Intelligence (EI), the intelligence intrinsic to the physical body of agents, is developed within the TAEI line as a key factor in making agents capable of interacting appropriately with different kinds of environments. This will allow us to bring intelligent autonomous agents (e.g., robots) to a higher level within industry, and also to bring them outside industry into natural environments, which are generally more complex for human exploration because they are usually unstructured (for example, space, underwater, aerial or remote ground habitats). Agents of the future, regardless of the scenario or environment in which they will act, will need to synthesize, at the design stage, trustworthy artificial intelligence and embodied intelligence.